Adversarial ML Attack that Secretly Gives a Language Model a Point of View

Schneier on Security

OCTOBER 21, 2022

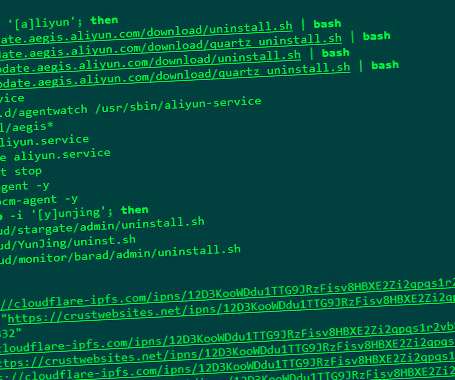

Machine learning security is extraordinarily difficult because the attacks are so varied—and it seems that each new one is weirder than the next. Here’s the latest: a training-time attack that forces the model to exhibit a point of view: Spinning Language Models: Risks of Propaganda-As-A-Service and Countermeasures.” Abstract: We investigate a new threat to neural sequence-to-sequence (seq2seq) models: training-time attacks that cause models to “spin” their outputs

Let's personalize your content